Computer vision in autonomous vehicles turns camera video into real-time road understanding, detecting lanes, signs, vehicles, pedestrians, and free space so the car can drive safely.

A human driver makes thousands of tiny visual decisions every minute: a brake light flicker, a pedestrian shifting weight at a curb, a lane line fading under shadow. A self-driving system has to catch those same signals without guessing, and without getting tired.

That’s why Vision AI is often called the “eyes” of autonomous driving. It doesn’t just see objects. It interprets scenes, tracks motion, estimates distance, and feeds those results into planning and control so the vehicle can slow down, stop, change lanes, or steer safely.

In this guide, we’ll break down how self-driving cars see the road, the core computer vision techniques behind that perception, the features it powers in real vehicles, and the edge cases engineers still work hardest to solve.

Vision AI (computer vision) is the technology that allows autonomous vehicles to see and understand the road using cameras and artificial intelligence.

It helps self-driving cars detect pedestrians, other vehicles, traffic lights, and traffic signs from visual data in real time.

In simple terms, computer vision systems turn raw camera images into driving decisions, making autonomous driving possible.

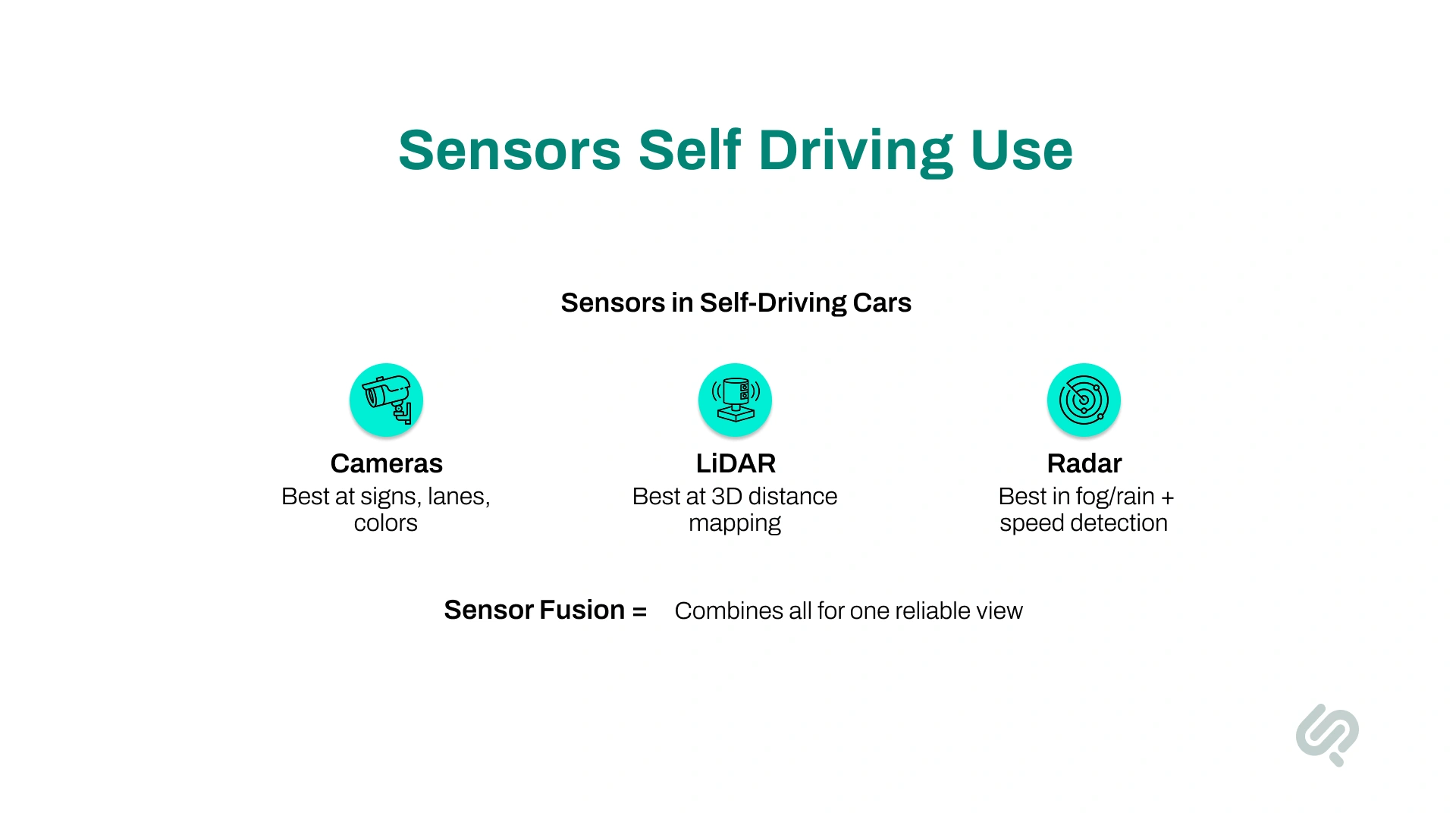

A self-driving car doesn’t rely on just one “eye.” It uses multiple sensors working together to understand what’s happening around it.

Each sensor sees the world differently. When combined, they help the car build a clear and reliable view of its surroundings, which is critical for safe autonomous driving.

Researchers at the University of Texas estimate that tightly spaced platoons of autonomous vehicles could reduce traffic congestion delays by up to 60% on highways, thanks to coordinated driving and smoother flow compared to human-driven cars. (2)

Cameras work like human eyes. They capture images of the road and help the car see important visual details, such as lane lines, traffic lights, traffic signs, pedestrians, and other vehicles.

Cameras are especially good at understanding colors and shapes, which makes them useful for reading road signs and recognizing signals.

Where cameras help most:

Limitations: Cameras depend on good lighting. They can struggle at night, in strong glare, or in heavy rain and fog. On their own, cameras also can’t measure distance very accurately.

LiDAR sends out tiny laser pulses and measures how long they take to return. This allows the car to build a 3D map of nearby objects and understand how far away things are, even in the dark. LiDAR is very accurate at measuring distance and detecting the shape of objects.
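The time-of-flight idea reduces to one line of arithmetic. The sketch below is a minimal illustration, not any vendor’s firmware; the function name `lidar_range_m` and the 200-nanosecond example are assumptions chosen for clarity.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second, in vacuum


def lidar_range_m(round_trip_time_s: float) -> float:
    """Distance to the reflecting surface from a pulse's round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0


# A pulse that returns after 200 nanoseconds hit something roughly 30 m away.
print(round(lidar_range_m(200e-9), 2))
```

Repeating this measurement across many beam directions is what produces the 3D point cloud.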

Where LiDAR helps most:

Limitations: LiDAR systems are expensive and add cost to the vehicle. Their performance can also drop in heavy rain, fog, or snow. The hardware itself is bulky, though newer versions are becoming smaller and cheaper.

Radar uses radio waves to detect objects and measure their speed. It works well in poor visibility, such as rain, fog, dust, or darkness.

Radar is especially good at telling how fast another vehicle is moving and how far away it is.
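Radar speed measurement commonly relies on the Doppler effect: a reflection from an approaching object comes back at a slightly higher frequency. The sketch below shows that relationship under simple assumptions. The function names are hypothetical, and the 77 GHz carrier is an assumed example value from a band widely used by automotive radar.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second


def doppler_from_speed(radial_speed_m_s: float, carrier_hz: float) -> float:
    """Frequency shift seen by the radar for a given closing speed."""
    # Factor of 2: the wave is shifted once on the way out and once on return.
    return 2.0 * radial_speed_m_s * carrier_hz / SPEED_OF_LIGHT_M_S


def speed_from_doppler(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Closing speed recovered from a measured frequency shift."""
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


carrier = 77e9  # assumed carrier frequency in Hz
shift = doppler_from_speed(30.0, carrier)  # 30 m/s is 108 km/h
print(f"Doppler shift: {shift:.0f} Hz")
print(f"Recovered speed: {speed_from_doppler(shift, carrier):.1f} m/s")
```

Note that this gives only the radial component of velocity, that is, motion directly toward or away from the sensor.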

Where radar helps most:

Limitations: Radar cannot clearly identify what an object is. It can tell that something is there and moving, but not whether it’s a pedestrian, a sign, or a pole.

No single sensor is perfect. That’s why self-driving cars use sensor fusion, which means combining data from cameras, LiDAR, and radar to create one complete understanding of the road.

Think of sensor fusion as cross-checking:

When one sensor struggles, the others help fill in the gaps. This makes autonomous driving more reliable, especially in challenging conditions such as bad weather or busy city streets.
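One simple way to picture this cross-checking is inverse-variance weighting, where each sensor’s distance estimate counts in proportion to how much it is trusted. This is a sketch of one textbook fusion technique, not a description of any specific vehicle’s stack. The class `RangeEstimate`, the function `fuse_ranges`, and all uncertainty values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RangeEstimate:
    sensor: str
    distance_m: float
    std_dev_m: float  # assumed measurement uncertainty, illustrative only


def fuse_ranges(estimates):
    """Combine distance estimates, weighting each by 1 / variance."""
    weights = [1.0 / (e.std_dev_m ** 2) for e in estimates]
    total = sum(weights)
    fused = sum(w * e.distance_m for w, e in zip(weights, estimates)) / total
    fused_std = (1.0 / total) ** 0.5  # combined uncertainty shrinks
    return fused, fused_std


readings = [
    RangeEstimate("camera", 42.0, 4.0),  # cameras are weak at range
    RangeEstimate("lidar", 40.2, 0.1),   # LiDAR is precise at range
    RangeEstimate("radar", 40.5, 0.5),
]
distance, uncertainty = fuse_ranges(readings)
print(f"fused distance: {distance:.2f} m (std {uncertainty:.2f} m)")
```

If a sensor degrades, for example a camera in glare, raising its `std_dev_m` automatically reduces its influence on the fused result.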

Different companies use different sensor setups:

There is still debate about which approach is best. However, most experts agree on one thing: Using multiple sensors together (sensor fusion) is essential for building safe and reliable self-driving vehicles.

The automotive computer vision AI market was estimated at about $1.9 billion in 2025 and is expected to grow to $8.9 billion by 2035 at a CAGR near 16.7% — driven by rising demand for advanced perception systems in vehicles. (3)

Most people think the hard part is “detecting objects.” In real autonomous driving, the bigger challenge is staying reliable in the long tail—rain glare, construction chaos, unusual road behavior, and partially hidden objects—without adding delay.

“Great perception isn’t just accuracy. It’s consistency in edge cases, low latency, and strong validation so the car behaves safely every time.”

— Hammad Maqbool, AI & Prompt Engineering Lead

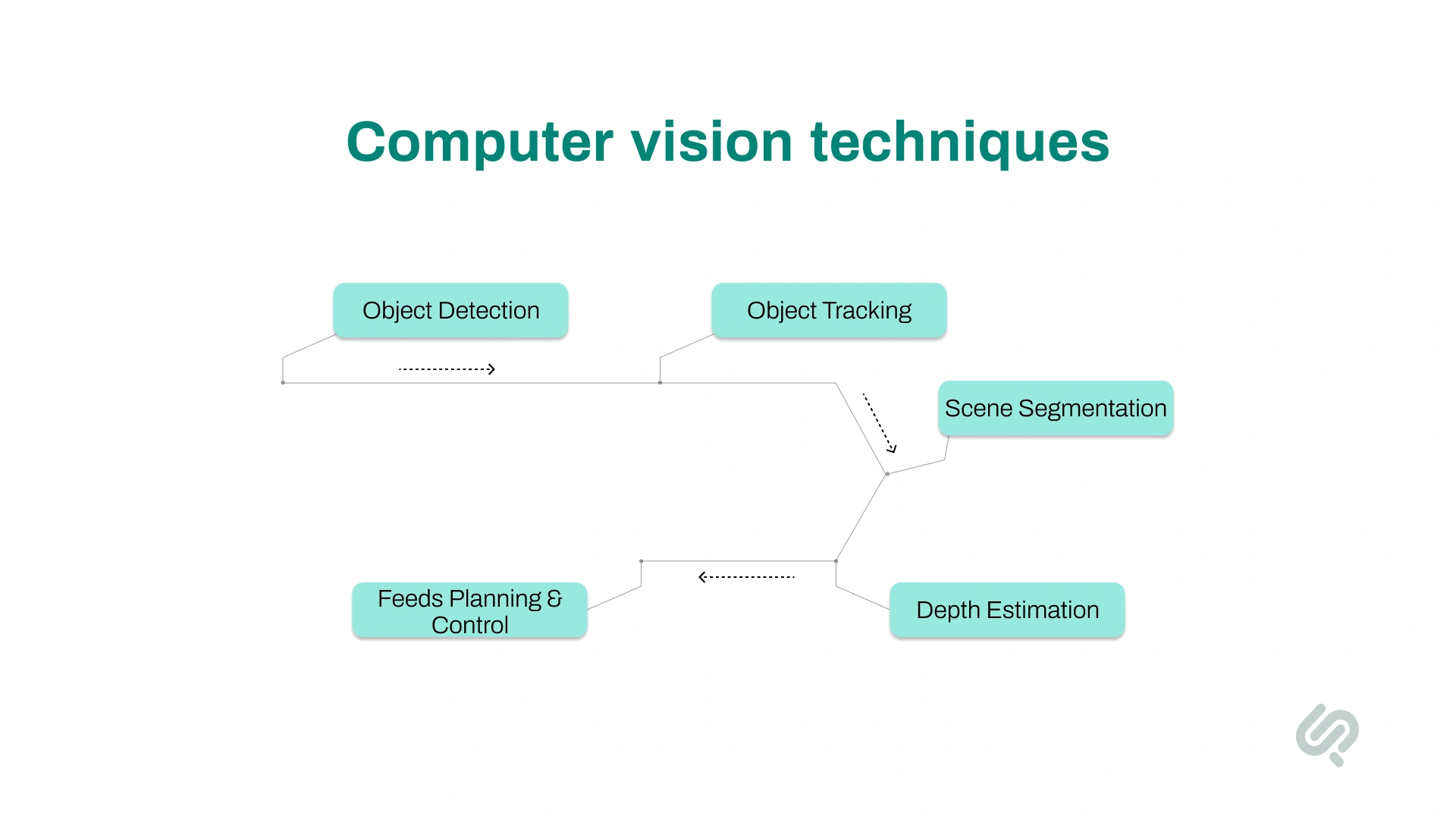

Once a self-driving car captures images using cameras and sensors, the next step is understanding what it sees. This is done using computer vision algorithms that analyze visual data in real time.

Here are the main techniques self-driving cars use:

This is how the car identifies its surroundings. Self-driving cars use object detection to identify and label objects in their surroundings, such as other vehicles, pedestrians, cyclists, traffic signs, lane markings, animals, and road obstacles.

The system draws boxes around objects and assigns labels like “car,” “person,” or “stop sign.”

What this helps with:

Why it matters:

Object detection gives the car a live map of “what is where” on the road. This allows the vehicle to make safe decisions, like slowing down when a pedestrian is nearby or stopping at a red light.
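To make the “boxes and labels” idea concrete, here is a minimal sketch of what a detector’s output might look like and how overlapping boxes are commonly compared using intersection over union (IoU). The `Detection` class, the `keep_confident` helper, the 0.5 score threshold, and the sample values are all hypothetical; real detectors output richer data.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    score: float  # model confidence between 0 and 1
    box: tuple    # (x_min, y_min, x_max, y_max) in pixels


def iou(a, b):
    """Intersection over union of two boxes; 1.0 means identical."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def keep_confident(detections, min_score=0.5):
    """Drop low-confidence detections (threshold is an assumed value)."""
    return [d for d in detections if d.score >= min_score]


frame = [
    Detection("car", 0.92, (100, 200, 300, 350)),
    Detection("person", 0.81, (420, 180, 470, 330)),
    Detection("stop sign", 0.31, (600, 50, 640, 90)),
]
for det in keep_confident(frame):
    print(det.label, det.score, det.box)
```

IoU is also the usual basis for scoring a detector against hand-labeled boxes.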

This is how the car follows moving objects over time. Once an object is detected, the car tracks its movement across multiple moments.

For example, if a person is crossing the road or another car is changing lanes, the system keeps track of where that object is moving.

What this helps with:

Why it matters:

Tracking helps the car predict what might happen next. If a cyclist is moving beside the car, the system can estimate where the cyclist will be shortly and avoid turning into their path. This gives the vehicle short-term “foresight” to react safely.
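The cyclist example can be sketched with the simplest possible motion model: assume the object keeps its current velocity. This is only an illustration with hypothetical function names and coordinates. Production trackers typically use more robust estimators, such as Kalman filters, and must also decide which detection belongs to which track.

```python
def estimate_velocity(p_prev, t_prev, p_curr, t_curr):
    """Velocity (m/s) from two timestamped positions in metres."""
    dt = t_curr - t_prev
    if dt <= 0:
        raise ValueError("observations must be in increasing time order")
    return ((p_curr[0] - p_prev[0]) / dt, (p_curr[1] - p_prev[1]) / dt)


def predict_position(p_curr, velocity, horizon_s):
    """Constant-velocity extrapolation horizon_s seconds ahead."""
    return (p_curr[0] + velocity[0] * horizon_s,
            p_curr[1] + velocity[1] * horizon_s)


# Cyclist seen 2 m to the side, moving forward 1 m in 0.2 s (assumed values).
velocity = estimate_velocity((2.0, 10.0), 0.0, (2.0, 11.0), 0.2)
future = predict_position((2.0, 11.0), velocity, horizon_s=1.0)
print("velocity (m/s):", velocity)
print("predicted position in 1 s:", future)
```

A planner could compare the predicted position against the car’s own intended path before committing to a turn.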

Semantic segmentation labels pixels by class (road, sidewalk, vehicle).

This is how the car understands the entire road scene, not just individual objects. Instead of only drawing boxes around objects, semantic segmentation labels every part of the image.

The system marks areas as road, sidewalk, vehicle, pedestrian, building, or sky. This helps the car understand which areas are safe to drive on and which are not.

What this helps with:

Why it matters:

This gives the car a clear picture of free space versus obstacles. Even if the system can’t tell exactly what an object is, it can still mark that area as not drivable.

This leads to smoother driving, better lane-keeping, and safer navigation in busy streets.
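A segmentation model’s output can be thought of as a grid with one class ID per pixel. The toy sketch below turns such a grid into a drivable-space mask. The class IDs, the tiny 3×4 `label_map`, and the function names are assumptions for illustration; real outputs have many more classes and far higher resolution.

```python
# Hypothetical class IDs for this example.
ROAD, SIDEWALK, VEHICLE, PEDESTRIAN = 0, 1, 2, 3

# A toy 3x4 "image" where each cell holds the predicted class of that pixel.
label_map = [
    [SIDEWALK, ROAD, ROAD,    SIDEWALK],
    [SIDEWALK, ROAD, VEHICLE, SIDEWALK],
    [SIDEWALK, ROAD, ROAD,    PEDESTRIAN],
]


def drivable_mask(labels, drivable_classes=(ROAD,)):
    """True where the pixel belongs to a class the car may drive on."""
    return [[cell in drivable_classes for cell in row] for row in labels]


def drivable_fraction(mask):
    """Share of pixels marked drivable."""
    total = sum(len(row) for row in mask)
    free = sum(sum(row) for row in mask)
    return free / total if total else 0.0


mask = drivable_mask(label_map)
for row in mask:
    print("".join("." if free else "#" for free in row))
print(f"drivable share: {drivable_fraction(mask):.2f}")
```

Anything not explicitly labeled as road is treated as blocked here, which mirrors the conservative behavior described above.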

This is how the car figures out how far away things are. Some self-driving systems estimate distance using cameras alone, especially when LiDAR is limited or not used.

By comparing images from two cameras (like human eyes) or using AI models, the system can estimate how far away objects are.

Depth estimation depends on camera calibration and synchronization. If cameras are slightly misaligned, dirty, vibrating, or out of sync, distance estimates can drift. That’s why production AV systems constantly monitor calibration health and camera quality to keep perception stable.
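For a calibrated, rectified stereo pair, the two-camera approach follows the standard pinhole relation: depth equals focal length times baseline divided by disparity. The sketch below uses assumed example values (1000-pixel focal length, 0.3 m baseline) and a hypothetical function name to show why small calibration errors matter.

```python
def stereo_depth_m(focal_length_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth from stereo disparity for a rectified camera pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


focal_px = 1000.0  # assumed focal length in pixels
baseline = 0.3     # assumed distance between the two cameras in metres

true_depth = stereo_depth_m(focal_px, baseline, 10.0)
off_by_one = stereo_depth_m(focal_px, baseline, 9.0)
print(f"disparity 10 px -> {true_depth:.1f} m")
print(f"disparity  9 px -> {off_by_one:.1f} m")
```

In this example a single pixel of disparity error shifts the estimate by more than three metres, and the effect grows with distance because depth is inversely proportional to disparity.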

What this helps with:

Why it matters:

Accurate distance measurement is critical for safety. The car must know whether an object is very close or far away to brake, slow down, or change lanes at the right time.

When combined with object detection, depth estimation helps build a 3D understanding of the environment.

Now that we’ve covered how computer vision works, let’s look at what it enables self-driving cars to do.

These are the real-world features powered by the car’s “digital vision”, the visible behaviors that make autonomous driving possible and safer on everyday roads.

Here are the main computer vision use cases in autonomous vehicles:

This is how the car stays in its lane. Computer vision systems detect lane lines on the road and help the vehicle stay centered within its lane.

Even when lane markings are faded, broken, or partly covered, the system can often infer where the lane is by using context from the road layout.

What this helps with:

Why it matters:

Staying in the correct lane is one of the most basic and critical parts of safe driving. Lane detection helps prevent drifting into other lanes and reduces the risk of side-swipe accidents.
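Once the lane lines are located, staying centered becomes a geometry problem. The sketch below assumes the perception system already reports each lane line’s lateral position in the vehicle’s frame (positive values to the left). The function names and sample numbers are hypothetical.

```python
def lane_width_m(left_line_y_m: float, right_line_y_m: float) -> float:
    """Distance between the two detected lane lines."""
    return left_line_y_m - right_line_y_m


def lane_center_offset_m(left_line_y_m: float, right_line_y_m: float) -> float:
    """Lateral position of the lane center relative to the vehicle.

    Positive means the center is to the left of the car, negative to the right.
    """
    return (left_line_y_m + right_line_y_m) / 2.0


# Assumed detections: left line 1.5 m to the left, right line 2.1 m to the right.
offset = lane_center_offset_m(1.5, -2.1)
print(f"lane width: {lane_width_m(1.5, -2.1):.1f} m")
print(f"lane center is {abs(offset):.1f} m to the "
      f"{'left' if offset > 0 else 'right'} of the car")
```

A lane-keeping controller would steer gently to drive this offset toward zero.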

This is how the car follows road rules. Computer vision reads traffic signs (speed limits, stop signs, yield signs) and understands traffic lights (red, yellow, green).

The system can recognize signs even when they are slightly damaged, dirty, or viewed from an angle. It can also detect temporary construction signs and electronic signs.

What this helps with:

Why it matters:

Recognizing signs and signals allows the car to follow traffic laws just like a human driver. This is essential for safe and legal autonomous driving in cities and on highways.

This is how the car avoids crashes. The vision system detects other vehicles, pedestrians, cyclists, animals, and unexpected objects on the road.

This allows the car to react quickly by slowing down, steering away, or braking to avoid a collision.

What this helps with:

Why it matters:

Fast and accurate detection helps the car react in milliseconds, often faster than a human driver. This reduces the risk of accidents and supports life-saving features like automatic emergency braking.
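A common way to reason about when to brake is time to collision (TTC): the gap to the obstacle divided by the speed at which that gap is closing. The sketch below is a simplified illustration. The 2-second threshold and function names are assumptions, not values from any production emergency-braking system.

```python
def time_to_collision_s(gap_m: float, closing_speed_m_s: float) -> float:
    """Seconds until contact if neither party changes speed."""
    if closing_speed_m_s <= 0:
        return float("inf")  # gap is steady or growing: no collision course
    return gap_m / closing_speed_m_s


def should_brake(gap_m: float, closing_speed_m_s: float,
                 threshold_s: float = 2.0) -> bool:
    """Flag braking when TTC drops below an assumed threshold."""
    return time_to_collision_s(gap_m, closing_speed_m_s) < threshold_s


print(time_to_collision_s(30.0, 10.0))  # 30 m gap closing at 10 m/s
print(should_brake(30.0, 10.0))
print(should_brake(15.0, 10.0))
```

The inputs come straight from perception: the gap from depth estimation and the closing speed from tracking or radar.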

This is how the car follows traffic smoothly. Using computer vision (often combined with radar), the car monitors the vehicle ahead and maintains a safe following distance.

If the car in front slows down, the autonomous system adjusts speed automatically. It also detects when another vehicle cuts in front and responds smoothly.

What this helps with:

Why it matters:

This keeps traffic flow smooth and reduces sudden braking. It also improves comfort and safety, especially during highway driving and stop-and-go traffic.
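The following behavior described above can be sketched as a time-gap policy: the faster the car travels, the larger the gap it tries to keep. This is a simplified proportional controller for illustration only. The gains, the 1.8-second time gap, the acceleration limits, and the function names are all assumed values.

```python
def desired_gap_m(ego_speed_m_s: float, time_gap_s: float = 1.8,
                  standstill_gap_m: float = 5.0) -> float:
    """Target following distance that grows with speed."""
    return standstill_gap_m + time_gap_s * ego_speed_m_s


def acc_acceleration(ego_speed_m_s: float, lead_speed_m_s: float, gap_m: float,
                     k_gap: float = 0.2, k_speed: float = 0.5,
                     min_accel: float = -3.0, max_accel: float = 1.5) -> float:
    """Acceleration command (m/s^2) from gap error and speed difference."""
    gap_error = gap_m - desired_gap_m(ego_speed_m_s)
    speed_error = lead_speed_m_s - ego_speed_m_s
    command = k_gap * gap_error + k_speed * speed_error
    # Clamp to comfortable limits so the response stays smooth.
    return max(min_accel, min(max_accel, command))


# Lead car is slower and closer than desired, so the command is negative.
print(acc_acceleration(ego_speed_m_s=25.0, lead_speed_m_s=22.0, gap_m=40.0))
# Matching speed at the desired gap needs no correction.
print(acc_acceleration(ego_speed_m_s=20.0, lead_speed_m_s=20.0,
                       gap_m=desired_gap_m(20.0)))
```

When another vehicle cuts in, the measured gap drops suddenly, and the clamp on deceleration is what keeps the response from feeling abrupt.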

This is how the car understands human behavior on the road. Beyond detecting people, some systems can interpret pedestrian behavior and gestures.

For example, the system may notice when someone is about to cross the street or recognize hand signals from traffic police at intersections.

What this helps with:

Why it matters:

Understanding human behavior helps the car act more naturally and safely in busy city environments where people don’t always follow perfect rules.

This is how the car knows where it can safely drive. Computer vision helps identify which parts of the scene are drivable road and which parts are obstacles.

By combining scene understanding and depth estimation, the car builds a local map of the road, including curbs, lane geometry, potholes, and speed bumps.

What this helps with:

Why it matters:

Knowing where the road is free and safe allows the car to drive smoothly without sudden stops or unsafe maneuvers.

This is how the car parks itself. Many vehicles use 360-degree camera views to assist with parking. In autonomous mode, computer vision detects parking spaces, curbs, and nearby vehicles to guide the car into parking spots safely.

What this helps with:

Why it matters:

Low-speed driving and parking are common sources of small accidents. Vision-based parking reduces bumps, scratches, and parking stress for drivers.

This is how self-driving systems keep improving over time. As cars drive, their vision systems collect large amounts of visual data.

This data is used to train and improve computer vision models, helping them learn from real-world driving situations, including rare or unusual scenarios.

What this helps with:

Why it matters:

Every mile driven helps the system learn. This continuous learning loop is key to improving safety, handling edge cases, and making autonomous vehicles more reliable over time.

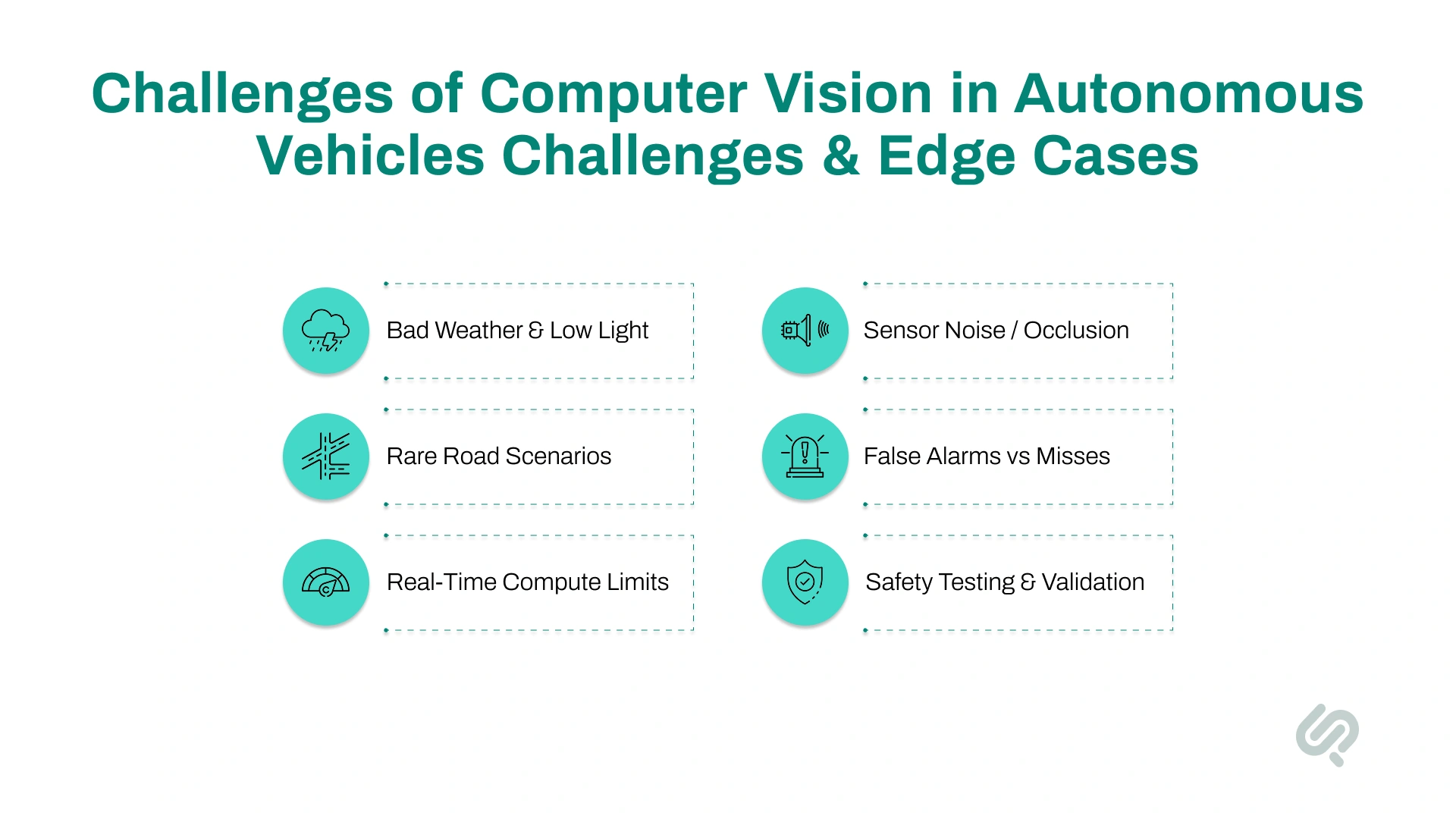

Building reliable computer vision for autonomous vehicles is hard because the real world is unpredictable. Roads, weather, people, and environments constantly change.

A 2024 study found that autonomous vehicles were about 5.25 times more likely than human-driven vehicles to be involved in a crash during dawn or dusk conditions and nearly twice as likely when making turns, highlighting specific scenarios where current perception systems still struggle. (4)

Here are the main challenges self-driving cars still struggle with, explained simply:

Bad lighting and weather can confuse cameras. Bright sunlight, glare, night driving, rain, fog, and snow can hide lane lines, road signs, and pedestrians.

Why it’s a challenge:

Cameras depend on clear visibility. Poor conditions reduce accuracy and increase the risk of missing important objects.

Each sensor has weaknesses. Cameras can be blocked, LiDAR struggles in heavy rain or snow, and radar lacks visual detail.

Why it’s a challenge:

If one sensor fails or gives bad data, the system must rely on others. Adding backups improves safety but increases cost and system complexity.

Self-driving cars may face unusual situations they weren’t trained on, such as:

Why it’s a challenge:

AI systems learn from past data. They can struggle with rare situations they’ve never seen before.

Sometimes the system sees danger when there is none, or fails to see real hazards.

Why it’s a challenge:

False alarms can cause unnecessary braking. Missed detections are more dangerous and can lead to accidents. Balancing safety without overreacting is difficult.
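This trade-off is usually measured with precision (how many alarms were real) and recall (how many real hazards were caught). The sketch below uses made-up detection scores to show how moving the confidence threshold shifts the balance. The data and the function name are hypothetical.

```python
def precision_recall(candidates, threshold):
    """candidates: list of (score, is_real_hazard) pairs."""
    tp = sum(1 for s, real in candidates if s >= threshold and real)
    fp = sum(1 for s, real in candidates if s >= threshold and not real)
    fn = sum(1 for s, real in candidates if s < threshold and real)
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 1.0
    return precision, recall


# Illustrative scores only: (model confidence, was it a real hazard?)
candidates = [
    (0.95, True), (0.90, True), (0.80, False),
    (0.60, True), (0.40, False), (0.30, True),
]

for threshold in (0.2, 0.5, 0.85):
    p, r = precision_recall(candidates, threshold)
    print(f"threshold {threshold:.2f}: precision {p:.2f}, recall {r:.2f}")
```

A high threshold removes false alarms but misses real hazards, while a low threshold catches everything at the cost of phantom braking.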

Self-driving cars must process massive amounts of camera and sensor data instantly.

Why it’s a challenge:

Delays of even a fraction of a second can affect safety. High-speed computing is required to make driving decisions in real time.
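A quick calculation shows why fractions of a second matter: the car keeps moving while the system is still processing. The function name and the example speed and latency figures below are assumptions for illustration.

```python
def distance_during_latency_m(speed_km_h: float, latency_ms: float) -> float:
    """Metres travelled before the system has finished processing a frame."""
    speed_m_s = speed_km_h / 3.6
    return speed_m_s * (latency_ms / 1000.0)


for latency in (50, 100, 250):
    travelled = distance_during_latency_m(108.0, latency)
    print(f"{latency} ms at 108 km/h -> {travelled:.1f} m travelled")
```

At highway speed, 100 ms of delay means the car covers about three metres before it can begin to react.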

Proving that vision systems are safe in every possible scenario is extremely difficult, and it reflects the broader emphasis on software quality assurance in modern engineering.

Why it’s a challenge:

No system can be tested for every real-world situation. Rare failures can still happen, even after extensive testing.

Many people still don’t trust self-driving cars.

Why it’s a challenge:

Even safe technology needs public confidence. High-profile accidents slow adoption and increase fear.

High accuracy isn’t enough in autonomous driving. Vision AI must be safe in real-time traffic, under pressure, and across thousands of scenario types. That’s why testing and validation are a full discipline in autonomous vehicle development.

Teams test vision systems against specific driving scenarios, such as:

This checks whether object detection, lane detection, and traffic sign recognition stay reliable.

Simulation allows:

This is key for improving long-tail performance without waiting months for real-world data.

Before public roads, teams validate in controlled environments:

Then comes limited real-world testing with strict safety rules.

Every model update must prove it didn’t introduce new failures:

Computer vision models only learn what they see in training. In autonomous driving, that means the system needs massive, diverse driving data. Not just normal daytime roads, but the messy real world too.

Cars collect camera and sensor data from real streets. This helps models learn:

Why it matters: Real-road data teaches the model how driving actually looks in production, which improves real-time perception and reduces missed detections.

Some scenarios are too rare (or too risky) to collect at scale, like:

Simulation helps generate these edge cases safely and repeatedly, which improves long-tail reliability in autonomous vehicle AI systems.

A strong AV dataset includes variation in:

Bottom line: Better training data leads to safer perception, stronger motion prediction, and fewer surprises on real roads.

For a computer vision model, data isn’t useful until it’s labeled. In autonomous driving, labeling turns raw driving footage into ground truth for training, so the model learns what to detect and how to behave.

Different driving tasks require different labels. Here are some common annotation types in autonomous vehicle computer vision:

If labels are inconsistent, the model learns confusion:

That leads to real-world issues like false braking, missed hazards, and unstable driving decisions.

Computer vision is moving fast because sensors, AI models, and onboard chips are improving together. The next wave of computer vision in autonomous vehicles will be defined by safer perception in more conditions, stronger sensor fusion, and better real-time decision-making.

What’s changing next (key trends):

Vision AI isn’t only for self-driving cars. It helps businesses understand images and video, then act on what the system “sees.”

Where it helps most:

Computer vision is the core technology that makes autonomous driving possible. By giving cars the ability to see, understand, and react to the road in real time, Vision AI is transforming safety, mobility, and how vehicles interact with the world.

While challenges like extreme weather and rare edge cases still limit full autonomy, rapid advances in sensors, AI models, and computing power are closing the gap.

As these systems mature, self-driving cars will move from experimental deployments to practical, everyday transportation, reshaping how people travel, commute, and experience the road.

Computer vision in autonomous vehicles is the use of AI to analyze camera and sensor data so a self-driving car can detect lanes, traffic signs, pedestrians, and other vehicles in real time.

Self-driving cars use cameras, radar, and sometimes LiDAR to capture visual and distance data, then apply computer vision algorithms to understand their surroundings and make driving decisions.

Computer vision is essential, but most autonomous systems combine it with radar and LiDAR for better reliability, especially in poor weather or low-visibility conditions.

Major challenges include bad weather, low light, rare edge cases, false detections, and ensuring real-time processing with high accuracy in complex environments.

Fully self-driving cars are still being tested in limited areas today. Wider adoption will depend on improvements in Vision AI, safety validation, regulations, and public trust over the next several years.