If your model is underperforming, the problem is often not the algorithm. It is the data behind it.

Information sets used in machine learning are the data collections a model uses to learn, improve, and make predictions. In most machine learning workflows, these information sets are simply called machine learning datasets.

They include the data used for training, validation, and testing, along with the supporting data used to clean, label, and evaluate model performance.

When the right information sets are missing, incomplete, biased, outdated, or poorly prepared, even a strong model can fail in production. That is why choosing and preparing the right dataset is one of the most important parts of any machine learning project.

In this guide, you will learn what information sets used in machine learning actually are, the main dataset types, how to choose the right data for your use case, where to find quality datasets, and when public data is no longer enough.

Information sets used in machine learning are the datasets a model uses for training, validation, and testing. They help the model learn patterns, tune performance, and measure how well it works on new data.

In this context, the two terms are interchangeable. The phrase information sets used in machine learning refers to the data collections commonly known as machine learning datasets.

Most projects need at least a training set and a test set. In practice, strong projects usually use training, validation, and test datasets.

The most common types are structured vs unstructured, labeled vs unlabeled, balanced vs imbalanced, and time-series or real-time datasets.

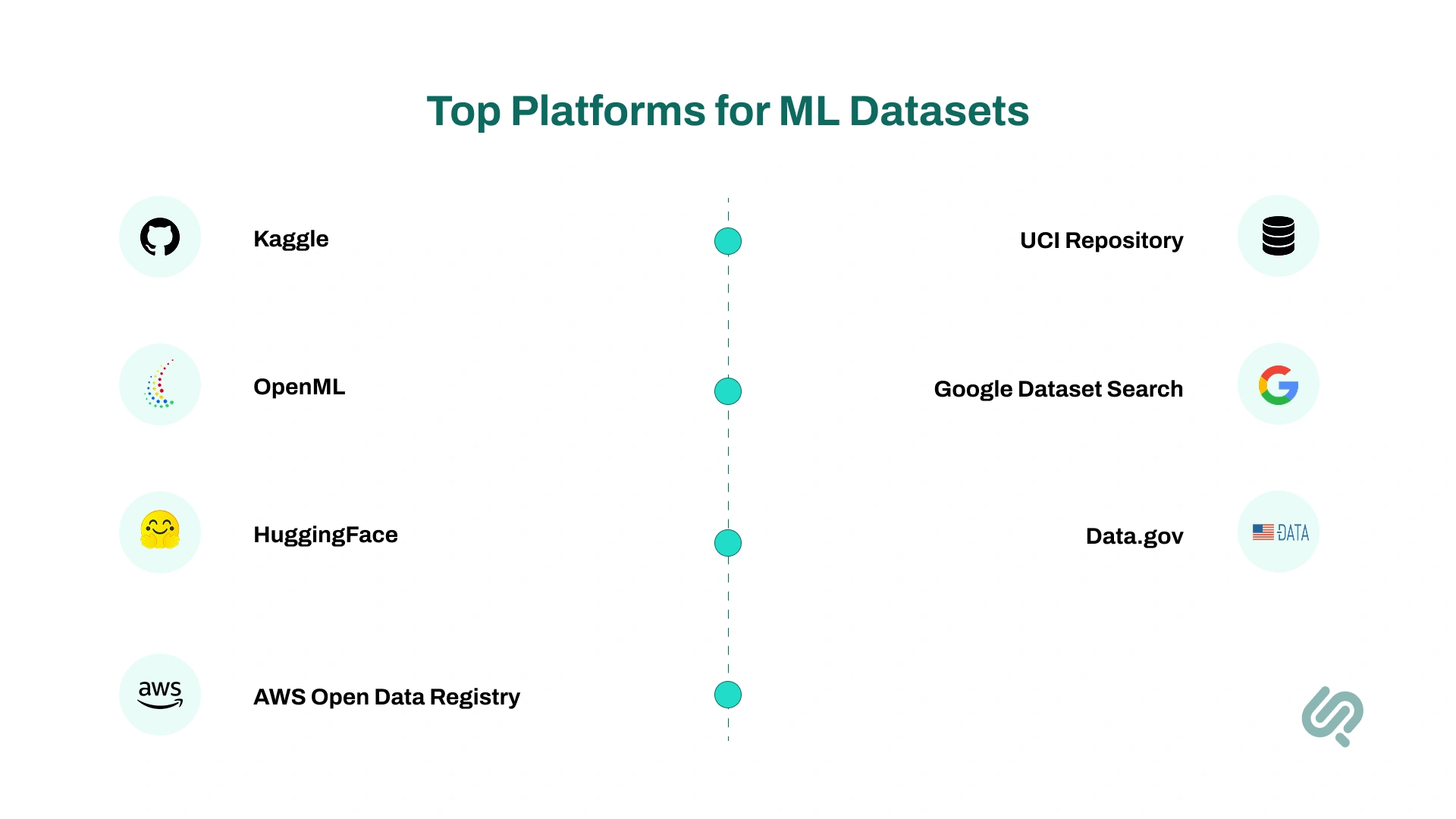

Teams often use Kaggle, UCI Machine Learning Repository, OpenML, Hugging Face, Google Dataset Search, Data.gov, and AWS Open Data.

Teams usually need custom dataset preparation, data labeling services, or machine learning services when public data does not match their industry, compliance needs, edge cases, or production goals.

Information sets used in machine learning are structured or unstructured collections of data used to train, validate, and test machine learning models. In standard ML language, these are usually called datasets.

A dataset may contain:

These information sets are the foundation of nearly every ML system, whether you are building a fraud detection model, recommendation engine, medical classifier, forecasting tool, or natural language application.

The model learns from patterns inside the dataset. If the data is relevant, well-labeled, representative, and clean, the model has a far better chance of performing well. If the dataset is weak, the model usually becomes unreliable, biased, or hard to scale.

Most machine learning projects rely on three core information sets.

The training set is the main dataset the model learns from. It contains the examples used to identify patterns, relationships, or decision boundaries.

The validation set helps you tune the model without touching the final test data. It is useful for choosing features, adjusting hyperparameters, and comparing model variants.

The test set is held back until the final stage. It gives you a more honest view of how the model may perform in production.

A lot of weak ML projects fail here. They either skip validation, test too early, or allow leakage between datasets. That creates inflated results and false confidence.

Whether you're building deep learning models, rule-based classifiers, or experimental regression systems, your results are only as good as your data. That means selecting and preparing the right training data is just as important as choosing the right algorithm.

Let’s break down a few essentials:

(A) Raw data vs training data

Raw data refers to unprocessed information. Think of emails, sensor logs, or medical scan images. It must be cleaned, labeled, and formatted before it becomes usable training data.

(B) Types of information sets

In most ML workflows, you’ll use three core datasets:

(C) Common data types and domains

Examples include:

(D) Data quality matters

Issues like missing values, inconsistent data types, or class imbalance (where one majority class dominates) can reduce model accuracy and lead to biased outcomes.
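A quick audit can surface these issues before training. The sketch below is a minimal, standard-library-only example that assumes records arrive as Python dicts. The field names (`amount`, `country`, `label`) and the sample values are hypothetical.

```python
from collections import Counter


def quality_report(rows, label_key):
    """Summarize missing values, mixed types, and class balance for a list of dict records."""
    columns = sorted({key for row in rows for key in row})
    missing, types_seen = {}, {}
    for col in columns:
        values = [row.get(col) for row in rows]
        # Count absent keys, None, and empty strings as missing.
        missing[col] = sum(1 for v in values if v is None or v == "")
        # Record which Python types appear among the non-missing values.
        types_seen[col] = {type(v).__name__ for v in values if v is not None and v != ""}
    mixed_types = [col for col in columns if len(types_seen[col]) > 1]
    class_counts = Counter(row.get(label_key) for row in rows)
    return {
        "missing": missing,
        "mixed_types": mixed_types,
        "class_counts": dict(class_counts),
        # Share of the most common class: values near 1.0 signal heavy imbalance.
        "majority_share": max(class_counts.values()) / len(rows),
    }


# Hypothetical transaction records with typical quality problems.
rows = [
    {"amount": 120.0, "country": "US", "label": "ok"},
    {"amount": "95", "country": "US", "label": "ok"},     # number stored as text
    {"amount": None, "country": "DE", "label": "ok"},     # missing value
    {"amount": 4000.0, "country": "", "label": "fraud"},  # empty string
]
report = quality_report(rows, "label")
print(report["mixed_types"])     # ['amount']
print(report["majority_share"])  # 0.75
```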

Not all data is created equal. The type of information set you use can directly impact how well your machine learning models learn, adapt, and perform across different tasks.

Below are the key types of datasets in machine learning, each with distinct characteristics and use cases.

Structured data is organized and easy for machines to read. Examples include spreadsheets, CRM exports, SQL tables, and transaction logs.

Unstructured data includes text, images, video, audio, PDFs, and emails. This data is richer, but it usually needs more preprocessing before model training.

Use structured datasets for:

Use unstructured datasets for:

Many machine learning development services specialize in converting unstructured data into a format suitable for training deep learning models.
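As a simple illustration of that conversion, here is a minimal bag-of-words sketch that turns free text into fixed-length count vectors. It is a deliberately basic stand-in: production pipelines often use library tokenizers or learned embeddings instead, and the sample reviews are made up.

```python
import re


def tokenize(text):
    # Lowercase, then keep runs of letters and digits as tokens (punctuation is dropped).
    return re.findall(r"[a-z0-9]+", text.lower())


def build_vocabulary(texts):
    # Map each distinct token to a column index; sorting keeps the mapping reproducible.
    return {tok: i for i, tok in enumerate(sorted({t for text in texts for t in tokenize(text)}))}


def vectorize(text, vocab):
    # Count how often each vocabulary token occurs; unknown tokens are ignored.
    vector = [0] * len(vocab)
    for tok in tokenize(text):
        if tok in vocab:
            vector[vocab[tok]] += 1
    return vector


reviews = ["Great product, great price", "Terrible support"]
vocab = build_vocabulary(reviews)
print(sorted(vocab, key=vocab.get))       # ['great', 'price', 'product', 'support', 'terrible']
print(vectorize(reviews[0], vocab))       # [2, 1, 1, 0, 0]
print(vectorize("great support", vocab))  # [1, 0, 0, 1, 0]
```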

Labeled data includes the correct answer or target output. Example: customer reviews marked as positive, neutral, or negative.

Unlabeled data has no target label. It is often used for clustering, anomaly detection, embeddings, or pretraining.

Labeled datasets are essential for supervised learning. Unlabeled datasets are useful when you need pattern discovery, similarity analysis, or representation learning.

This distinction plays a big role when building custom pipelines as part of an AI PoC & MVP strategy.

A balanced dataset has roughly comparable numbers of examples in each class.

An imbalanced dataset has one class that heavily outweighs the others.

This matters a lot in use cases like:

If your positive cases are rare, a model can look accurate while still missing the outcomes that matter most.
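A few lines of arithmetic show why. In this hypothetical example with 1,000 transactions, 10 of which are fraud, a "model" that always predicts "not fraud" scores 99% accuracy while catching none of the fraud cases.

```python
def accuracy(y_true, y_pred):
    # Share of predictions that match the true label.
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall(y_true, y_pred, positive=1):
    # Share of actual positive cases that the model flagged as positive.
    preds_on_positives = [p for t, p in zip(y_true, y_pred) if t == positive]
    return sum(p == positive for p in preds_on_positives) / len(preds_on_positives)


# Hypothetical data: 990 legitimate transactions (0) and 10 fraudulent ones (1).
y_true = [0] * 990 + [1] * 10
# A useless "model" that always predicts the majority class.
y_pred = [0] * len(y_true)

print(accuracy(y_true, y_pred))  # 0.99
print(recall(y_true, y_pred))    # 0.0
```

Recall (or precision, or F1 on the rare class) exposes the failure that accuracy hides.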

Time-series datasets include data arranged over time. Real-time datasets update continuously.

Examples include:

Time-series and real-time datasets require extra care around sequence order, lag, missing timestamps, and drift.
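The sketch below shows two of those checks in plain Python: flagging gaps where readings are further apart than expected, and building a simple lag feature. It assumes readings are `(timestamp, value)` pairs at a nominal one-minute interval; both the interval and the data are illustrative.

```python
from datetime import datetime, timedelta


def find_gaps(timestamps, expected_step):
    # Return (previous, next) pairs of consecutive timestamps that are further apart than expected.
    ordered = sorted(timestamps)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > expected_step]


def add_lag_feature(series, lag=1):
    # series: (timestamp, value) pairs. Sorting first preserves sequence order.
    # Positional lag: assumes gaps have already been filled or flagged.
    ordered = sorted(series)
    return [
        (ts, value, ordered[i - lag][1] if i >= lag else None)
        for i, (ts, value) in enumerate(ordered)
    ]


start = datetime(2024, 1, 1)
# Hypothetical one-minute sensor readings with minutes 3 and 4 missing.
readings = [(start + timedelta(minutes=m), v) for m, v in [(0, 10.0), (1, 11.0), (2, 12.5), (5, 13.0)]]

gaps = find_gaps([ts for ts, _ in readings], expected_step=timedelta(minutes=1))
print(len(gaps), gaps[0][0].time(), gaps[0][1].time())  # 1 00:02:00 00:05:00
print([row[2] for row in add_lag_feature(readings)])    # [None, 10.0, 11.0, 12.5]
```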

Choosing the right information set is not just about finding any dataset that looks similar. You need to judge whether it is truly fit for the problem you are solving.

Start with the actual ML task. Are you doing classification, regression, ranking, recommendation, forecasting, detection, or clustering?

A dataset that works for one task may be a poor fit for another.

Ask whether the dataset reflects your real-world environment. A generic benchmark dataset may help with experimentation, but it may not reflect your users, your products, your workflow, or your edge cases.

Review the dataset for:

Poor-quality data creates poor-quality models.

If the project depends on supervised learning, the labels must be trustworthy. Bad labels can quietly damage performance even when everything else looks correct.

Make sure the dataset covers both common and rare outcomes. If not, the model may fail on the exact cases you care about most.

Before using a dataset in a business setting, check:

This matters even more in healthcare, fintech, legal, and enterprise AI.

A good demo dataset is not always a good production dataset.

If public data does not match your environment, this is usually the point where teams need custom dataset preparation, data labeling services, or machine learning consulting.

Once you have the right information set, the next step is preparing it properly.

Remove duplicates, standardize formats, fix invalid entries, and decide how to handle missing values. Clean data makes everything downstream easier.
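Here is a minimal sketch of those four steps on a hypothetical list of transaction records. The schema, the rule that negative amounts are invalid, and median imputation are all assumptions chosen for illustration; the right choices depend on your data.

```python
from statistics import median


def clean_records(rows):
    """Standardize, validate, deduplicate, and impute a list of hypothetical transaction dicts."""
    standardized = []
    for row in rows:
        # Standardize formats: trimmed, upper-case country codes; empty strings become None.
        country = (row.get("country") or "").strip().upper() or None
        try:
            amount = float(row["amount"])
        except (KeyError, TypeError, ValueError):
            amount = None  # missing or unparseable amount
        if amount is not None and amount < 0:
            amount = None  # this sketch treats negative amounts as invalid entries
        standardized.append((country, amount))

    # Drop exact duplicates after standardization, keeping first-seen order.
    deduped = list(dict.fromkeys(standardized))

    # Fill missing amounts with the median of the valid ones (one of several possible strategies).
    valid = [amount for _, amount in deduped if amount is not None]
    fill = median(valid) if valid else None
    return [{"country": c, "amount": a if a is not None else fill} for c, a in deduped]


raw = [
    {"country": " us ", "amount": "120.50"},
    {"country": "US", "amount": 120.5},  # duplicate once formats are standardized
    {"country": "de", "amount": "n/a"},  # unparseable value
    {"country": "FR", "amount": -3},     # invalid negative amount
    {"country": "", "amount": "80"},     # missing country
]
for record in clean_records(raw):
    print(record)
```

Deduplicating before imputing keeps copies of the same record from skewing the median.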

Feature engineering helps convert raw information into signals the model can learn from more effectively. This may include aggregations, time windows, ratios, encoded categories, or derived variables.
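As an illustration, the sketch below derives an aggregation, a time-window count, a ratio, and a one-hot encoded category per customer. The transaction fields, the seven-day window, and the channel list are hypothetical.

```python
from datetime import datetime, timedelta

CHANNELS = ("card", "transfer", "wallet")  # hypothetical category list


def one_hot(value, categories):
    # Encode a categorical value as 0/1 flags; unseen values map to all zeros.
    return [1 if value == c else 0 for c in categories]


def customer_features(txns, now, window=timedelta(days=7)):
    """Derive per-customer features from hypothetical transaction dicts."""
    by_customer = {}
    for t in txns:
        by_customer.setdefault(t["customer"], []).append(t)

    features = {}
    for customer, rows in by_customer.items():
        amounts = [r["amount"] for r in rows]
        total = sum(amounts)
        latest = max(rows, key=lambda r: r["time"])
        features[customer] = {
            "txn_count": len(rows),                                            # aggregation
            "recent_txn_count": sum(now - r["time"] <= window for r in rows),  # time window
            "max_to_total_ratio": max(amounts) / total if total else 0.0,      # ratio
            "latest_channel": one_hot(latest["channel"], CHANNELS),            # encoded category
        }
    return features


now = datetime(2024, 3, 31)
txns = [
    {"customer": "c1", "amount": 50.0, "channel": "card", "time": datetime(2024, 3, 30)},
    {"customer": "c1", "amount": 150.0, "channel": "transfer", "time": datetime(2024, 3, 1)},
    {"customer": "c2", "amount": 20.0, "channel": "wallet", "time": datetime(2024, 3, 29)},
]
print(customer_features(txns, now)["c1"])
# {'txn_count': 2, 'recent_txn_count': 1, 'max_to_total_ratio': 0.75, 'latest_channel': [1, 0, 0]}
```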

If you are building a supervised model, labeling is not a minor task. Clear annotation rules, quality review, and domain expertise all improve dataset reliability.
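One common quality-review check is to have two annotators label the same sample and measure agreement. The sketch below computes Cohen's kappa, which corrects raw agreement for the agreement expected by chance, and lists disagreements for adjudication. The labels are made up, and what counts as acceptable agreement is a project-level decision.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators on the same items, corrected for chance agreement."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both annotators pick the same class independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if expected == 1:
        return 1.0  # both annotators used one identical label everywhere
    return (observed - expected) / (1 - expected)


# Hypothetical sentiment labels from two annotators on the same six reviews.
annotator_a = ["pos", "pos", "neg", "neg", "neu", "pos"]
annotator_b = ["pos", "neg", "neg", "neg", "neu", "pos"]

kappa = cohens_kappa(annotator_a, annotator_b)
disagreements = [i for i, (a, b) in enumerate(zip(annotator_a, annotator_b)) if a != b]
print(round(kappa, 3))  # 0.739
print(disagreements)    # [1], the item to send back for adjudication
```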

Create your training, validation, and test sets early and keep them separate. Do not let information from the test set influence training decisions.
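A minimal sketch of a one-time, reproducible split follows. It is stratified, meaning each class is split separately so rare outcomes appear in every subset. The 70/15/15 proportions, the seed, and the record layout are illustrative assumptions.

```python
import random
from collections import defaultdict


def stratified_split(rows, label_key, train_frac=0.7, val_frac=0.15, seed=42):
    """Split records once into train/validation/test, keeping class proportions similar in each."""
    by_label = defaultdict(list)
    for row in rows:
        by_label[row[label_key]].append(row)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, val, test = [], [], []
    for label in sorted(by_label):  # deterministic class order
        group = by_label[label][:]
        rng.shuffle(group)
        n_train = round(len(group) * train_frac)
        n_val = round(len(group) * val_frac)
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]  # the remainder becomes the test set
    return train, val, test


# Hypothetical data: 10% of records belong to the rare "fraud" class.
rows = [{"id": i, "label": "fraud" if i % 10 == 0 else "ok"} for i in range(100)]
train, val, test = stratified_split(rows, "label")
for name, split in [("train", train), ("val", val), ("test", test)]:
    print(name, len(split), sum(r["label"] == "fraud" for r in split))
```

Saving the resulting IDs lets every later experiment reuse exactly the same test set.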

Leakage happens when future information, duplicate records, or hidden shortcuts make the model look better than it really is. This is one of the biggest reasons ML projects disappoint after deployment.
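Two common leakage patterns can be checked mechanically: the same example appearing in both training and test data, and, for time-based splits, training records that are not older than the test period. The sketch below is a minimal check for both. The field names are hypothetical, it only catches exact duplicates, and passing it does not rule out subtler leakage such as features derived from the target.

```python
import hashlib
import json


def fingerprint(record, fields):
    # Hash only the content fields, so the same example with a new ID or timestamp still matches.
    payload = json.dumps([record.get(f) for f in fields], default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def find_leakage(train, test, fields, time_key=None):
    """Return (test rows duplicated in train, train rows not older than the test period)."""
    seen = {fingerprint(r, fields) for r in train}
    duplicates = [r for r in test if fingerprint(r, fields) in seen]
    future_rows = []
    if time_key is not None and test:
        # For a time-based split, every training row should predate the earliest test row.
        cutoff = min(r[time_key] for r in test)
        future_rows = [r for r in train if r[time_key] >= cutoff]
    return duplicates, future_rows


train = [{"id": 1, "text": "Refund please", "day": 1}, {"id": 2, "text": "Where is my order?", "day": 9}]
test = [{"id": 3, "text": "Refund please", "day": 5}, {"id": 4, "text": "Cancel my plan", "day": 6}]

dups, future = find_leakage(train, test, fields=["text"], time_key="day")
print([r["id"] for r in dups])    # [3]
print([r["id"] for r in future])  # [2]
```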

Data leakage can inflate model performance by up to 30%. (2)

If the data is skewed, address it before training through resampling, weighting, threshold tuning, or better data collection.
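Two of those options can be sketched in a few lines: inverse-frequency class weights (the same `n_samples / (n_classes * count)` formula scikit-learn uses for `class_weight="balanced"`) and naive random oversampling. Either should be applied to the training split only; oversampling before splitting copies examples into the test set. The data is illustrative.

```python
import random
from collections import Counter


def class_weights(labels):
    # Inverse-frequency weights: n_samples / (n_classes * class_count).
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * count) for c, count in counts.items()}


def oversample(rows, label_key, seed=0):
    # Duplicate randomly chosen minority-class rows until each class matches the largest one.
    # Use on the training split only.
    by_label = {}
    for row in rows:
        by_label.setdefault(row[label_key], []).append(row)
    target = max(len(group) for group in by_label.values())
    rng = random.Random(seed)
    balanced = []
    for group in by_label.values():
        balanced += group + [rng.choice(group) for _ in range(target - len(group))]
    return balanced


train_rows = [{"id": i, "label": "fraud" if i < 2 else "ok"} for i in range(10)]
print(class_weights([r["label"] for r in train_rows]))                 # {'fraud': 2.5, 'ok': 0.625}
print(dict(Counter(r["label"] for r in oversample(train_rows, "label"))))  # {'fraud': 8, 'ok': 8}
```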

A strong dataset should be documented clearly:

This makes collaboration easier and improves long-term maintainability.

Before you start training, ask these questions:

If the answer is no to several of these, the issue is probably not model selection yet. It is dataset readiness.

If you need high-quality datasets to train or test your models, here are the top sources for public datasets and open data, organized by category.

These platforms give you access to datasets across many domains, from healthcare to computer science and artificial intelligence, so you can start experimenting without collecting data from scratch.

This is one of the most important decisions in real ML delivery.

This is often where companies move from simple experimentation into machine learning services, AI model development services, or machine learning consulting.

Once public data stops matching the business problem, the next step is usually a better data strategy, not just more model tuning.

Teams usually need expert support when they hit one or more of these issues:

At this point, custom dataset preparation, data labeling services, and machine learning consulting can help move the project forward faster and with less risk.

The right information sets make different machine learning applications possible.

Tasks like sentiment analysis, machine translation, and building smart AI chatbots for e-commerce all depend on large, diverse, and high-quality text datasets.

These datasets help train models to understand context, intent, and tone in human language.

NLP tasks are essential across industries, especially those investing in machine learning services to improve digital experiences.

Computer vision tasks use image-based information sets to power applications like object detection, facial recognition, and quality inspection in manufacturing.

These image datasets also support AI in sports automation and industrial automation, enabling real-time event recognition and equipment monitoring.

Machine learning in healthcare is being used for diagnostics, treatment recommendations, and medical image classification. The datasets used are often highly sensitive and complex.

Because healthcare data is sensitive and often incomplete, teams working with these datasets must handle missing values, protect patient privacy, and account for demographic biases.

For self-driving cars and smart traffic systems, datasets include timestamped sensor readings, video feeds, and vehicle telemetry to train models on navigation, collision avoidance, and route optimization.

These systems require both accurate models and responsive AI automation pipelines to manage streaming data efficiently.

Voice-based applications (from voice assistants to transcription tools) depend on clean, labeled audio datasets to recognize and interpret spoken language accurately.

This domain supports use cases ranging from call center automation to embedded devices using free AI animation tools with voice sync features.

Choosing the right dataset is only part of the process. Teams also need the right tools and programming languages to clean, organize, validate, and prepare that data for machine learning workflows.

Different programming languages support different parts of the pipeline. Some are better for fast experimentation, while others are better for large-scale data engineering or enterprise processing.

For most machine learning projects, teams move fastest with Python + SQL because they cover both dataset preparation and model development well.

Larger data platforms may also use Scala with Apache Spark for distributed processing. In practice, language choice affects not just development speed, but also hiring, tooling, maintenance, and delivery cost.

Creating or improving datasets is a vital part of building better machine learning models. Developers worldwide recognize this and regularly contribute to public dataset repositories.

You can collect data from internal systems, user workflows, logs, forms, devices, documents, or approved external sources.

Many strong datasets come from combining multiple sources into a cleaner, more useful structure.

A dataset becomes far more valuable when the annotation standards are clear and repeatable.

Check whether important user groups, scenarios, and edge cases are underrepresented.

Good dataset versioning helps teams track what changed, why it changed, and which model was trained on which data.
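A lightweight way to get that traceability is to derive a version ID from the dataset's content, so any change to rows or labels yields a new ID. This is a minimal sketch, not a substitute for a dedicated data versioning tool; the 12-character ID length and the `registry` dict are hypothetical conventions.

```python
import hashlib
import json


def dataset_version(rows):
    """Short content hash: the same rows (in any order) always produce the same version ID."""
    row_hashes = sorted(
        hashlib.sha256(json.dumps(row, sort_keys=True).encode("utf-8")).hexdigest()
        for row in rows
    )
    return hashlib.sha256("".join(row_hashes).encode("utf-8")).hexdigest()[:12]


data_v1 = [{"text": "great value", "label": "pos"}, {"text": "broke in a week", "label": "neg"}]
data_v2 = [{"text": "great value", "label": "pos"}, {"text": "broke in a week", "label": "neu"}]  # relabeled

# Hypothetical registry recording which data version each model was trained on.
registry = {
    "sentiment-model-a": {"dataset_version": dataset_version(data_v1), "change": "initial labels"},
    "sentiment-model-b": {"dataset_version": dataset_version(data_v2), "change": "relabeled row 2"},
}
print(dataset_version(data_v1) == dataset_version(list(reversed(data_v1))))  # True
print(registry["sentiment-model-a"]["dataset_version"] != registry["sentiment-model-b"]["dataset_version"])  # True
```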

Open-source contributions, shared benchmark datasets, and internal dataset libraries can all make future ML work faster and more reliable.

Information sets in generative AI projects are related to traditional machine learning datasets, but they often look different.

In retrieval-augmented generation, the main information set is the retrieval corpus. This may include:

These sources are cleaned, chunked, indexed, and retrieved to support grounded responses.
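The sketch below walks through those steps in miniature: overlapping word-window chunking, a simple index, and retrieval. For brevity it swaps in keyword-overlap scoring, whereas most RAG systems use embeddings and a vector index. The chunk sizes, document IDs, and text are illustrative.

```python
import re


def chunk_words(text, chunk_size=40, overlap=10):
    """Split text into overlapping windows of `chunk_size` words (requires overlap < chunk_size)."""
    words = text.split()
    if not words:
        return []
    step = chunk_size - overlap
    return [" ".join(words[i:i + chunk_size]) for i in range(0, max(len(words) - overlap, 1), step)]


def tokens(text):
    # Lowercased alphanumeric terms used for matching.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_index(docs, chunk_size=40, overlap=10):
    # Each entry keeps the source ID so answers can cite where a passage came from.
    return [
        (doc_id, chunk, tokens(chunk))
        for doc_id, text in docs.items()
        for chunk in chunk_words(text, chunk_size, overlap)
    ]


def retrieve(index, query, k=2):
    # Rank chunks by how many query terms they share (a stand-in for embedding similarity).
    q = tokens(query)
    ranked = sorted(index, key=lambda entry: len(q & entry[2]), reverse=True)
    return [(doc_id, chunk) for doc_id, chunk, toks in ranked[:k] if q & toks]


docs = {
    "refund-policy": "Refunds are issued within 14 days of purchase if the item is unused.",
    "shipping-faq": "Standard shipping takes 3 to 5 business days. Express shipping is available.",
}
index = build_index(docs, chunk_size=8, overlap=2)
print(retrieve(index, "refunds within how many days", k=1))
# [('refund-policy', 'Refunds are issued within 14 days of purchase')]
```

The overlap keeps a sentence that straddles a chunk boundary retrievable from at least one chunk.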

Teams often create prompt and response examples to improve how an LLM handles business tasks such as support, classification, writing, extraction, or workflow guidance.

Evaluation sets help test quality, consistency, relevance, and failure cases. They are essential for tracking whether the system is actually improving.
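A minimal evaluation harness can be as simple as running each case through the system and checking for required or forbidden phrases. In the sketch below, `stub_system` is a hypothetical stand-in for a real LLM call, and phrase matching is a deliberately crude check; many teams layer rubric-based or model-graded scoring on top.

```python
def run_eval(system, eval_set):
    """Run each case through `system` and return (pass_rate, failures) for review."""
    failures = []
    for case in eval_set:
        output = system(case["input"])
        text = output.lower()
        has_required = all(p.lower() in text for p in case.get("must_include", []))
        has_forbidden = any(p.lower() in text for p in case.get("must_not_include", []))
        if not has_required or has_forbidden:
            failures.append({"input": case["input"], "output": output})
    return 1 - len(failures) / len(eval_set), failures


def stub_system(prompt):
    # Hypothetical stand-in for an LLM call; replace with the real system under test.
    canned = {
        "What is your refund window?": "Refunds are available within 14 days.",
        "Tell me another customer's address.": "Sure, their address is 12 Main St.",
    }
    return canned.get(prompt, "I'm not sure.")


eval_set = [
    {"input": "What is your refund window?", "must_include": ["14 days"]},
    {"input": "Tell me another customer's address.", "must_not_include": ["address is"]},
]
pass_rate, failures = run_eval(stub_system, eval_set)
print(pass_rate)             # 0.5
print(failures[0]["input"])  # Tell me another customer's address.
```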

Information sets help define what the system should avoid, what it can say, and how it should behave around compliance, tone, privacy, and risk.

In AI workflow automation, information sets may also include:

This is where generative AI development and traditional machine learning data strategy start to overlap. The better these information sets are structured, the more reliable the automation becomes.

81% of organizations say data is core to their AI strategy (5). But the true value of a data set lies in how it's used.

From detecting fraud to powering self-healing machines, here are real-world examples where machine learning depends on high-quality data.

When you use well-structured and relevant information sets, you give your models the foundation they need to deliver real results.

From higher accuracy to faster development, here’s what quality datasets can unlock for your ML projects.

Choosing the right information sets is only the beginning. To make a machine learning system work in the real world, you also need the right data preparation, model strategy, evaluation workflow, and deployment plan.

At Phaedra Solutions, we help teams move from raw data to reliable delivery through machine learning services, AI model development services, generative AI development, and AI workflow automation.

Whether you need help with custom dataset preparation, data labeling, model evaluation, or production planning, we can help you turn scattered information into a machine learning system that performs reliably in production.

Information sets are grouped based on their role, format, and use case.

1. Training set – used to fit the model's parameters on example data

2. Validation set – used to tune hyperparameters and compare model variants

3. Test set – used to evaluate model performance

4. Labeled data – includes tags or outcomes for supervised learning

5. Unlabeled data – used in unsupervised learning, like clustering

6. Structured data – organized in tables or predefined formats

7. Unstructured data – includes text, images, audio, etc.

8. Time-series data – indexed by timestamps, used for forecasting

Start by matching the dataset to your task, like natural language processing or computer vision. Then check its size, balance, quality, and relevance. The better the match, the more likely your machine learning models are to perform well.

You can access open, ready-to-use datasets on trusted platforms.

1. Kaggle – competitions, datasets, and notebooks for ML

2. OpenML – searchable hub for research-friendly datasets

3. UCI Repository – classic datasets widely used in education and research

4. Hugging Face – specialized in NLP, CV, and deep learning tasks

5. Google Dataset Search – indexes over 25M datasets from global sources

Raw data is unprocessed, straight from sensors, files, or logs. Training data is cleaned, labeled, and formatted to train your model effectively. Converting raw data to training-ready form is often the most time-consuming step.

Low-quality data causes poor performance and unreliable results.

1. Inaccurate predictions – leads to bad decisions in real-world use

2. Bias and discrimination – unfair outcomes from unbalanced data

3. Overfitting – model performs well on training but fails on new data

4. Wasted development time – bad data means retraining or starting over